Tech transfer is a re-entry tax. The process didn't change; the paperwork did.

Tech transfer is where a process, defined in development, becomes a process executed in GMP manufacturing. It is where a process, proven at one site, starts running at a second. It is where a sponsor hands a process to a CDMO, and where the CDMO hands execution back. Nothing about the science changes during a transfer. Almost everything about the documentation does.

The transfer itself is where programs run over schedule. Timelines of six to eighteen months are the industry norm. Consultants are hired at $300/hour. Parameter spreadsheets are assembled. Equipment equivalency is argued through slide decks. Site readiness is tracked in a SharePoint file named "Tech Transfer V7 FINAL.xlsx." None of this produces a better process; it produces proof that you have a process, stitched together by hand from documents that were never designed to talk to each other.

The perverse thing is that this cost reappears at every transfer event: dev to GMP, site to site, sponsor to CDMO, and CDMO to new CDMO when the sponsor switches manufacturers. Each time, the same parameters are re-keyed, the same rationale is re-explained, the same equivalency arguments are re-assembled. Institutional knowledge that ought to compound across transfers evaporates with every handoff instead. Experienced engineers become bottlenecks because only they remember where the 2022 DOE that justified the pH range actually lives.

The problem isn't transfer — it's that process lives as documents instead of data

The root cause is that process definitions live as documents, not as data. A Word SOP or a PDF master batch record is a snapshot in time. To transfer a process, you read the snapshot and re-create it in the target system by hand, line by line, parameter by parameter. Every re-creation introduces drift. Every drift requires reconciliation. Every reconciliation adds weeks. Every reconciled document spawns its own derivatives (site-specific MBRs, training SOPs, validation protocols), each of which has to stay in sync with the original as the original continues to evolve.

The incumbent response is to layer more documentation on top: transfer protocols, equipment equivalency matrices, risk assessments, gap analyses, site readiness checklists, training requirements, technology transfer reports. Each lives in its own file, version-controlled by filename, kept in sync with the underlying process by hand. When the process changes — a CPP range tightens, an equipment vendor substitutes a component, a new site is added — every downstream document has to be reviewed and updated. The system doesn't propagate; humans do.

Audit trail fragmentation is the second hidden cost. The development ELN has its own audit trail. The manufacturing MES has another. The quality management system has a third. The statistical tool used for CPP justification has a fourth. When an inspector asks why a parameter was set where it was set, the answer lives in audit trails spread across three to five systems. Reconstruction is manual and error-prone. Every audit becomes a scavenger hunt, and experienced engineers become the index: only they remember what lives where.

Process definitions carry forward as structured data, not re-keyed parameters

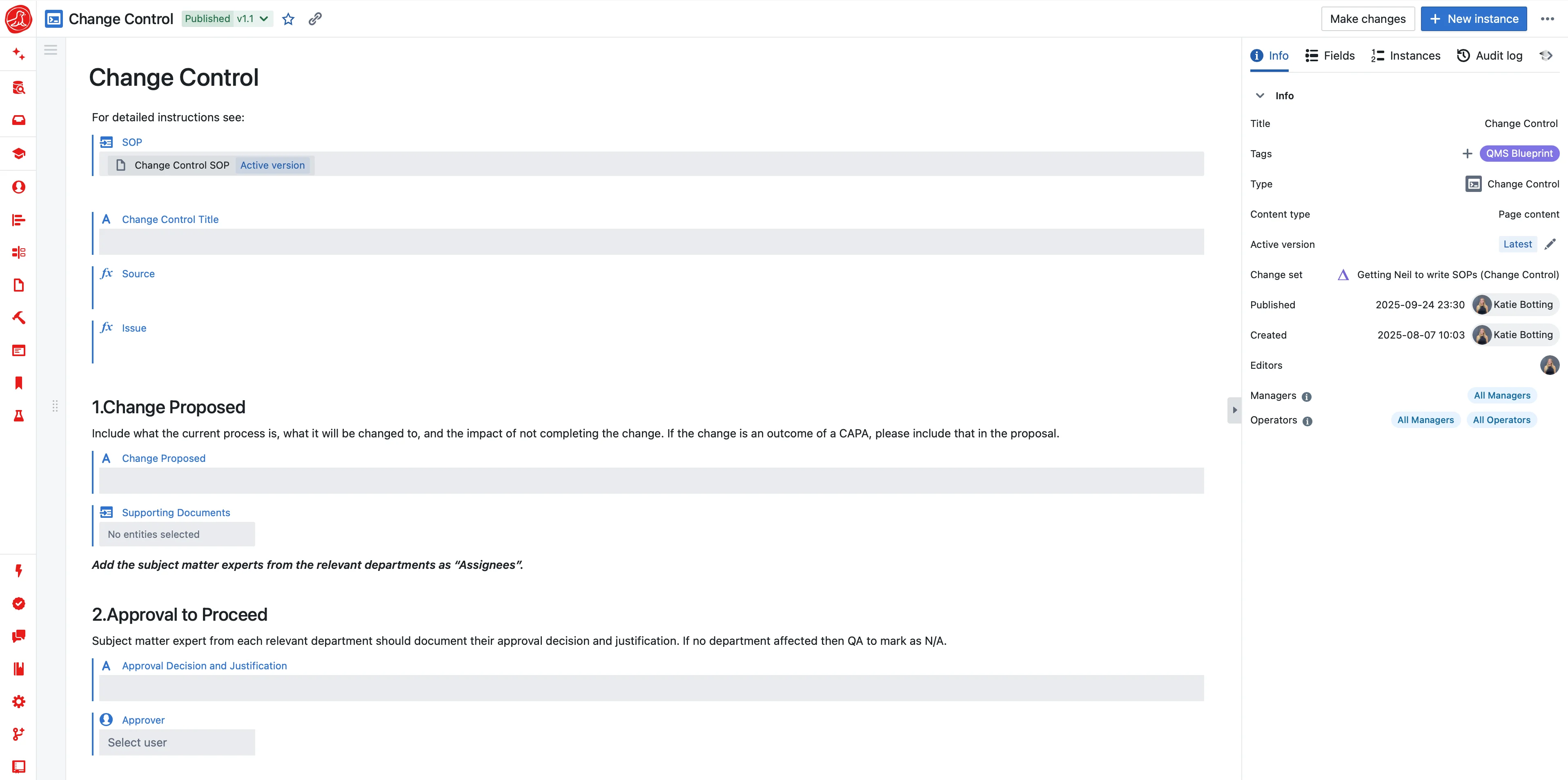

Seal treats the process as a structured, version-controlled asset, not a document. Each unit operation, analytical method, and material specification is defined once and composed into a Platform Process that defines what the process is and why. A Site-Specific Process binds that platform to a specific facility: a 2000L bioreactor in Boston, 5000L in Dublin, 1000L in Singapore. Same platform, three configurations. When the platform improves, the improvement is available to every site. When a site-specific parameter changes, the scope of what needs re-review is explicit, not inferred from a CC-all email.

The Platform Process is composed of reusable, versioned building blocks. A unit operation carries its own inputs, outputs, parameters, and quality attributes. An analytical method carries its validation state and acceptance criteria. A material specification references the same catalogue used by procurement and receiving. These blocks are not copies of each other. They are canonical references, so a change made once propagates wherever the block is used, with change control enforced at propagation time rather than after the fact.
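The difference between a copy and a canonical reference can be sketched in a few lines. This is a minimal illustration, not Seal's schema: the class names, the "mAb platform" label, and the pH values are all hypothetical, and change control is left out so the reference behavior stays visible.

```python
from dataclasses import dataclass, field


@dataclass
class UnitOperation:
    """A reusable building block (hypothetical shape, not Seal's schema)."""
    name: str
    version: int
    parameters: dict[str, tuple[float, float]]  # parameter -> (low, high)


@dataclass
class PlatformProcess:
    """Defines what the process is; holds references to blocks, not copies."""
    name: str
    unit_operations: list[UnitOperation] = field(default_factory=list)


@dataclass
class SiteSpecificProcess:
    """Binds a platform to one facility's configuration."""
    platform: PlatformProcess
    site: str
    bioreactor_volume_l: int


# One block, defined once. The pH range is an illustrative value.
cell_culture = UnitOperation("cell culture", 1, {"pH": (6.8, 7.2)})
platform = PlatformProcess("mAb platform", [cell_culture])

# Same platform, three site configurations (volumes taken from the text).
sites = [
    SiteSpecificProcess(platform, "Boston", 2000),
    SiteSpecificProcess(platform, "Dublin", 5000),
    SiteSpecificProcess(platform, "Singapore", 1000),
]

# A change made once on the shared block is visible from every site,
# because each site points at the same object rather than a copy.
cell_culture.parameters["pH"] = (6.9, 7.1)
cell_culture.version += 1

ph_by_site = {s.site: s.platform.unit_operations[0].parameters["pH"] for s in sites}
```

In a real system the mutation would be a reviewed change rather than a direct assignment; the point of the sketch is only that there is one record to change.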

This is the mechanism that makes tech transfer stop being a project and start being a promotion. You don't re-key parameters at the target site. You bind the target site's configuration to the platform, and execution inherits the definition. The master batch record is generated from the process definition, not typed up in parallel to it. When regulators ask to see the process, you don't hand them a collection of documents. You hand them a traversable data model whose links from parameter to development study to batch to deviation are inspectable in seconds.
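As a rough sketch of what "generated, not typed up in parallel" could mean, the function below renders batch-record lines from a platform definition plus a site's overrides. The function name, record layout, and all parameter values are assumptions made for illustration, not Seal's actual output format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Parameter:
    """One platform-level parameter with its range (values are illustrative)."""
    name: str
    low: float
    high: float
    unit: str


def generate_mbr(process_name: str, site: str, parameters: list[Parameter],
                 site_overrides: dict[str, tuple[float, float]]) -> list[str]:
    """Render batch-record lines from the definition plus site bindings."""
    lines = [f"Master Batch Record: {process_name} @ {site}"]
    for p in parameters:
        # A site override wins; everything else is inherited from the platform.
        low, high = site_overrides.get(p.name, (p.low, p.high))
        source = "site-specific" if p.name in site_overrides else "inherited"
        lines.append(f"{p.name}: {low}-{high} {p.unit} ({source})")
    return lines


platform_params = [
    Parameter("Temperature", 36.5, 37.5, "C"),
    Parameter("Agitation", 80, 120, "RPM"),
]

# Dublin binds its own agitation range; temperature is inherited untouched.
dublin_mbr = generate_mbr("mAb platform", "Dublin", platform_params,
                          {"Agitation": (60, 90)})
```

Because the record is derived, nobody re-keys a parameter at the target site: changing the definition or the binding and regenerating is the whole update.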

Why development and GMP must live on the same platform

Most software in this space positions itself as a layer that connects process data across your existing tools. A "digital thread" on top of your ELN, MES, LIMS, and QMS. The pitch is that your tools stay in place and a separate lifecycle tool threads data across them. The pitch sounds reasonable until you look at the details.

That model fails where the details matter. The thread breaks at every system boundary. Development work is authored in the ELN with its own data model, versioning, and change control. A layered tool reads that out, holds it in a separate data model, and pushes it to the MES, which re-interprets it and sends results back through the thread. Every crossing adds translation overhead. Every system has its own audit trail. The thread is only as strong as the weakest integration, which in regulated environments is always weaker than you want.

Worse, the development tool and the manufacturing tool were built for different users with different priorities. So every concept that exists in both — a unit operation, a CPP, an equipment specification — has two slightly different definitions, two change histories, and two sets of people who think theirs is canonical. When a scientist tightens a CPP range in the ELN, it takes a change-control cycle in the layered tool to update the "thread," and another cycle in the MES to receive the change. Each cycle has its own approvers, its own timelines, its own risk of getting stuck. A process improvement that should propagate in hours takes weeks.

Layered tools across separate systems (each system keeps its own audit trail and its own change control):

- Same concept (unit op, CPP) has 2+ definitions across systems
- Change in ELN doesn't propagate; it goes through a translator
- Audit trail splits: which system's is canonical?
- Every system has its own users, permissions, SOPs, validation
- Integration breaks require revalidating the integration
- Vendor lock-in compounds: 5 systems, 5 vendors, 5 roadmaps

One platform across the lifecycle (every stage shares the same audit trail and the same change control):

- Unit operation in ELN = unit operation in MBR (identical record)
- CPP tightened in dev propagates by change control, not translation
- One audit query, one answer, no reconciliation
- One set of users, permissions, SOPs across the lifecycle
- No integration to break; no integration to revalidate
- One vendor, one roadmap, one contract

Seal takes a different approach: Process Development and GMP Manufacturing run on the same platform. When a scientist defines a unit operation in the ELN, the GMP master batch record inherits the same data model — not a translation of it, the data itself. When a CPP range tightens during process characterization, the change propagates through one change-control workflow on the same platform, not through an integration layer. Equipment equivalency, analytical methods, material specs, and operating ranges all live in one authoritative place, with a single version history, a single audit trail, and a single permissions model.

This is not a thread layered on top of separate systems. It is a single system that spans the lifecycle. The distinction is the difference between "your data is reconciled across tools" and "your data is the tools." The first architecture accumulates integration debt forever; the second eliminates the category of problem.

For organizations that genuinely can't consolidate on one platform — because an incumbent system is deeply embedded in an existing validated process, or because a contract manufacturer mandates specific tools — Seal can operate alongside those systems rather than replacing them. Structured process definitions, AI extraction for legacy documents, and changeset review still deliver value. But the full benefit of a unified architecture requires a unified architecture. Teams that try to get "most of the benefit" from a layered approach usually end up rebuilding the integration themselves within eighteen months.

AI extracts your legacy documents into a changeset you review like a pull request

Most organizations live in the messy middle: thousands of existing development documents in PDFs, JMP files, Excel workbooks, and PowerPoint decks. The transfer program cannot pause for two years while the archive gets restructured. Seal ingests these documents and AI extracts the structured records — CPPs, operating ranges, rationale, experimental context — into a changeset. You review the changeset the way you review a pull request: see every proposed change, edit what's wrong, approve what's right. Nothing enters the database without human verification. The AI does not invent process; it transcribes it.

The changeset model is deliberately boring. Every proposed extraction is a diff: what's being added, what's being modified, what's being linked to what. Reviewers see the source document alongside the extracted record. If the AI identified the pH range correctly, you accept. If it pulled the wrong value, you correct it in-line. If it proposed a parameter that shouldn't be there, you reject it. The database only updates when a human approves. There is no black-box model output going directly to the process definition.
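The review loop described above can be sketched as a small state machine. Everything here is hypothetical (the class names, the record keys, the source-document labels, the values); the sketch only illustrates the rule that a record reaches the database when a named reviewer has accepted or corrected it, and never otherwise.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    CORRECTED = "corrected"
    REJECTED = "rejected"


@dataclass
class ProposedRecord:
    """One extracted value plus a pointer back to its source document."""
    key: str
    value: str
    source: str
    decision: Decision = Decision.PENDING
    reviewer: Optional[str] = None


def accept(rec: ProposedRecord, reviewer: str) -> None:
    rec.decision, rec.reviewer = Decision.ACCEPTED, reviewer


def correct(rec: ProposedRecord, reviewer: str, value: str) -> None:
    rec.value, rec.decision, rec.reviewer = value, Decision.CORRECTED, reviewer


def reject(rec: ProposedRecord, reviewer: str) -> None:
    rec.decision, rec.reviewer = Decision.REJECTED, reviewer


def apply_changeset(database: dict[str, str],
                    changeset: list[ProposedRecord]) -> dict[str, str]:
    """Return a new database containing only human-approved records."""
    updated = dict(database)
    for rec in changeset:
        approved = rec.decision in (Decision.ACCEPTED, Decision.CORRECTED)
        if approved and rec.reviewer:
            updated[rec.key] = rec.value
    return updated


# Illustrative extraction results; keys, values, and sources are made up.
changeset = [
    ProposedRecord("pH range", "6.8-7.2", "dev report, p. 12"),
    ProposedRecord("temperature", "73.0 C", "dev report, p. 14"),
    ProposedRecord("stir time", "5 min", "slide deck, slide 3"),
    ProposedRecord("feed strategy", "bolus", "workbook, sheet 2"),
]
accept(changeset[0], "reviewer-1")              # extracted correctly
correct(changeset[1], "reviewer-1", "37.0 C")   # wrong value, fixed in-line
reject(changeset[2], "reviewer-1")              # should not be there
# changeset[3] is left pending and therefore never applied

database = apply_changeset({}, changeset)
```

Keeping `apply_changeset` free of any model call is the design choice that matters: extraction can be as sophisticated as it likes, but the write path only ever reads reviewer decisions.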

This is the step that makes the rest of the platform useful for real organizations with real history. Greenfield deployments are rare; most buyers have a decade of work in disconnected documents. Instead of requiring a two-year migration project, Seal lets you extract value incrementally. The first tech transfer you do after adoption benefits from whatever you've extracted; the second benefits more; by the third, the archive is live data and the transfer is a promotion.

Equipment equivalency as a first-class entity, not a Word attachment

Tech transfers between sites stand or fall on equipment equivalency arguments. Does a 2000L Sartorius bioreactor perform equivalently to a 2000L Thermo bioreactor? What compensates for differences in impeller geometry? Does a Tangential Flow Filtration skid from vendor A produce comparable shear stress to vendor B? Today these arguments live in Word documents attached to transfer protocols, with rationale trapped in appendices that nobody reads during the next transfer.

In Seal, equipment equivalency is a first-class entity linked to process definitions and to specific parameter ranges. When a new site proposes non-identical equipment, the system surfaces which parameters are affected, which development studies support the substitution, and which risk assessments still need to be generated. Equivalency becomes reviewable in one place, not reconstructed for each audit.
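One way to picture equivalency as an entity rather than an attachment is a record that links the substitution to affected parameters, supporting studies, and risk assessments, and can report what is still missing. The field names, equipment labels, and document IDs below are placeholders, not Seal's data model.

```python
from dataclasses import dataclass, field


@dataclass
class EquivalencyClaim:
    """Links an equipment substitution to parameters and supporting evidence."""
    source_equipment: str
    target_equipment: str
    affected_parameters: list[str]
    # parameter -> IDs of development studies that support the substitution
    supporting_studies: dict[str, list[str]] = field(default_factory=dict)
    # parameter -> ID of the completed risk assessment
    risk_assessments: dict[str, str] = field(default_factory=dict)

    def open_items(self) -> list[str]:
        """Affected parameters that still lack a risk assessment."""
        return [p for p in self.affected_parameters
                if p not in self.risk_assessments]


claim = EquivalencyClaim(
    source_equipment="Bioreactor A, 2000 L",
    target_equipment="Bioreactor B, 2000 L",
    affected_parameters=["Agitation (RPM)", "Dissolved oxygen"],
    supporting_studies={"Agitation (RPM)": ["STUDY-001"]},
    risk_assessments={"Agitation (RPM)": "RA-001"},
)

todo = claim.open_items()
```

Because the claim is data, the next transfer can query it for the same parameters instead of rereading an appendix.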

This matters most for scale changes and vendor changes. A 5000L scale-up from a 2000L pilot is not a simple arithmetic exercise: mixing dynamics, mass transfer, and heat removal all change non-linearly. Seal captures the engineering arguments that justify scaling as structured data, linked to the specific parameters that are scale-sensitive. When a future transfer proposes an even larger scale, those arguments are available as starting points — not as archaeology dug out of a shared drive.

Multi-site: global standards, local flexibility

Multi-site organizations face a fundamental tension: global standards enable efficiency and regulatory consistency, but local sites have legitimate differences. Traditional approaches force uniformity, which produces shadow systems and processes that exist on paper but not in practice. Sites that can't legitimately meet a global standard find ways to comply on paper while operating differently.

Seal's answer: global building blocks define the what and why; local sites configure the how. Sites own their execution while inheriting traceability to global definitions. Improvements propagate. Differences are explicit, not implicit.

When the global team decides to tighten a parameter range based on new data, the change is proposed against the Platform Process. Each site's Site-Specific Process sees the proposed change, evaluates local impact (does our equipment support the tighter range? do our operators need retraining?), and either accepts the change or requests an exception. Exceptions are documented structurally — not as shadow SOPs, but as explicit, justified, time-bound deviations from the global standard. Regulators can see both the global standard and the local state without needing a side meeting with the site quality lead to translate.
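A sketch of the accept-or-exception step, under the assumption that an exception is only valid when it carries both a justification and an expiry date. The class, the status strings, and the dates are illustrative.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SiteResponse:
    """A site's answer to a proposed change on the Platform Process."""
    site: str
    accepted: bool
    justification: Optional[str] = None
    exception_expires: Optional[date] = None

    def __post_init__(self) -> None:
        # An exception must be justified and time-bound, or it is refused.
        if not self.accepted and not (self.justification and self.exception_expires):
            raise ValueError(f"{self.site}: exception needs justification and expiry")


def site_status(response: SiteResponse, today: date) -> str:
    """Report where a site stands relative to the global standard."""
    if response.accepted:
        return "on global standard"
    if today <= response.exception_expires:
        return "active exception"
    return "expired exception"


boston = SiteResponse("Boston", accepted=True)
dublin = SiteResponse(
    "Dublin",
    accepted=False,
    justification="current equipment cannot hold the tighter range",
    exception_expires=date(2025, 6, 30),
)

statuses = {
    r.site: site_status(r, date(2025, 1, 15)) for r in (boston, dublin)
}
```

Rejecting an unjustified or open-ended exception at construction time is what keeps a local difference from turning into a shadow SOP.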

Transfer without re-validation

The biggest cost hidden inside tech transfer is re-validation. When you can't demonstrate that the target process is identical to the source, you validate it from scratch: engineering runs, PPQ batches, new validation reports, updated regulatory dossiers. Each costs months and requires clean manufacturing slots the site often doesn't have.

When you can demonstrate identity — because both sites run from the same platform definition with scoped site-specific bindings — the scope of re-validation collapses to the genuine differences, not every line item. A transfer where only the 2000L bioreactor model differs from a pilot is a transfer that validates the scale-up, not every CPP.

Without a shared definition, all ten parameters are re-validated: pH operating range, temperature, dissolved oxygen, agitation (RPM), harvest criteria, viable cell density, feed strategy, gas flow rates, pH control strategy, and base addition schedule.

With a shared platform definition, eight are inherited: pH operating range, temperature, harvest criteria, viable cell density, feed strategy, gas flow rates, pH control strategy, and base addition schedule. Two are re-validated:

- Agitation (RPM): Dublin impeller geometry differs
- Dissolved oxygen: sparger type differs
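The parameter lists above amount to a set difference: whatever the target site binds differently is re-validated, and everything else is inherited. A minimal sketch using the parameter names and reasons from the lists (the function name and data layout are assumptions):

```python
PARAMETERS = [
    "pH operating range", "Temperature", "Dissolved oxygen", "Agitation (RPM)",
    "Harvest criteria", "Viable cell density", "Feed strategy",
    "Gas flow rates", "pH control strategy", "Base addition schedule",
]

# parameter -> reason the target site's binding differs from the source
DUBLIN_DIFFERENCES = {
    "Agitation (RPM)": "Dublin impeller geometry differs",
    "Dissolved oxygen": "sparger type differs",
}


def revalidation_scope(parameters: list[str],
                       site_differences: dict[str, str]):
    """Split parameters into inherited ones and ones needing re-validation."""
    inherited = [p for p in parameters if p not in site_differences]
    revalidate = {p: site_differences[p]
                  for p in parameters if p in site_differences}
    return inherited, revalidate


inherited, revalidate = revalidation_scope(PARAMETERS, DUBLIN_DIFFERENCES)
```

With no shared definition there is no trustworthy `site_differences` map, which is why the fallback is to treat all ten parameters as different.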

Regulators support this approach. ICH Q12 defines Established Conditions: the elements whose change requires a regulatory submission, as distinct from operational ranges that can change under the pharmaceutical quality system. Seal models this distinction natively. Changes within operational ranges happen through change control without regulatory filing. Changes to Established Conditions trigger the filing workflow automatically. The platform encodes what regulators actually require, rather than defaulting to the most conservative interpretation at every step.
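The routing rule can be sketched under one deliberately simplified assumption: each parameter has a filed range (its Established Condition) and an operational range that sits inside it, and a proposed operational range needs a filing only if it leaves the filed range. The class, the workflow labels, and the pH values are illustrative, not Seal's implementation or a reading of any specific dossier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParameterSpec:
    name: str
    established: tuple[float, float]  # filed range (Established Condition)
    operational: tuple[float, float]  # day-to-day range inside the filed one


def route_change(spec: ParameterSpec, new_operational: tuple[float, float]) -> str:
    """Pick the workflow for a proposed operational range."""
    low, high = new_operational
    if low > high:
        raise ValueError("range is inverted")
    e_low, e_high = spec.established
    if e_low <= low and high <= e_high:
        # Still inside what was filed: handled under the quality system.
        return "change control"
    # Leaves the filed range, so the Established Condition itself changes.
    return "regulatory filing"


ph = ParameterSpec("pH", established=(6.8, 7.2), operational=(6.9, 7.1))

within = route_change(ph, (6.85, 7.15))
outside = route_change(ph, (6.7, 7.1))
```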

From eighteen months to weeks — and what the team gets back

Programs that used to take 18 months from dev handoff to first GMP batch complete in weeks. The saved time comes from the same few mechanisms: parameter re-entry disappears, equivalency arguments are reviewable in one place, re-validation scopes to real differences, and audit readiness is a property of the data rather than a quarterly fire drill.

Investigation and change-control cycles close faster because parameters and their rationale are queryable, not locked inside Word attachments. When an operator runs out of tolerance on a batch, the investigation team has the parameter's development history in one click. When a change is proposed, the impact assessment draws on structured batch history rather than a manual batch-record search.

CDMO programs deliver client updates from live data, not from three-hour PowerPoint preparation. Client meetings become discussions about the science instead of status catch-ups about whether the data is current. New engineers onboard against a system rather than against institutional memory. The person who knew where the 2022 DOE lives is no longer a bottleneck. Regulators inspect a system that answers their questions natively rather than a stack of documents that has to be manually indexed.

Seal can operate alongside existing ELN, MES, LIMS, and QMS systems, or serve as an all-in-one platform. The value is the same: a process definition that carries forward, with AI extraction for legacy documents and changeset review for everything that enters the system. The difference in outcomes grows with the scope of consolidation — same-platform architecture compounds benefits that layered architectures cannot deliver.