Approved But Never Finished

CC-2024-047 was approved on January 15th. The change request had perfect documentation: a clear description of the proposed modification to the tablet coating process, a thorough rationale explaining why the change was needed, and a comprehensive impact assessment listing all affected procedures. R&D signed off. QA signed off. Regulatory signed off. Operations signed off. The approval workflow completed in three days. Everyone felt good about their change control process.

Six months later, an auditor asked to see the SOP that was supposed to be updated as part of that change. It still showed the old process. The process that was supposed to have been replaced in January. The training that was supposed to happen? Never scheduled, never completed. The validation protocol that was supposed to verify the new process? Never executed. The change control record showed "Approved" with a green checkmark.

The change was approved. It was never implemented. Approval was mistaken for completion.

The Approval Trap

Most change control systems end at approval. They manage the process of getting sign-offs: routing the change request through the right reviewers, collecting the right signatures, documenting the right justifications. When all the signatures are collected, the system declares success. Change approved. Change closed.

But approval doesn't change anything. It authorizes a change. The actual change (updating the procedure, retraining the operators, requalifying the equipment) happens after approval. And that's where organizations fail.

Implementation tasks get assigned and forgotten. The SOP update goes to a technical writer who gets pulled onto another project. The training goes to a supervisor who is short-staffed and keeps postponing. The validation protocol goes to an engineer who assumes someone else is handling the execution. Without rigorous tracking, tasks slip through cracks.

When auditors examine your change control system, they don't just verify that changes were approved. They verify that changes were implemented. Show me the updated procedure. Show me the training records. Show me the validation results. If you can't produce these, your change control system failed, regardless of how many signatures you collected.

Implementation as the Core Function

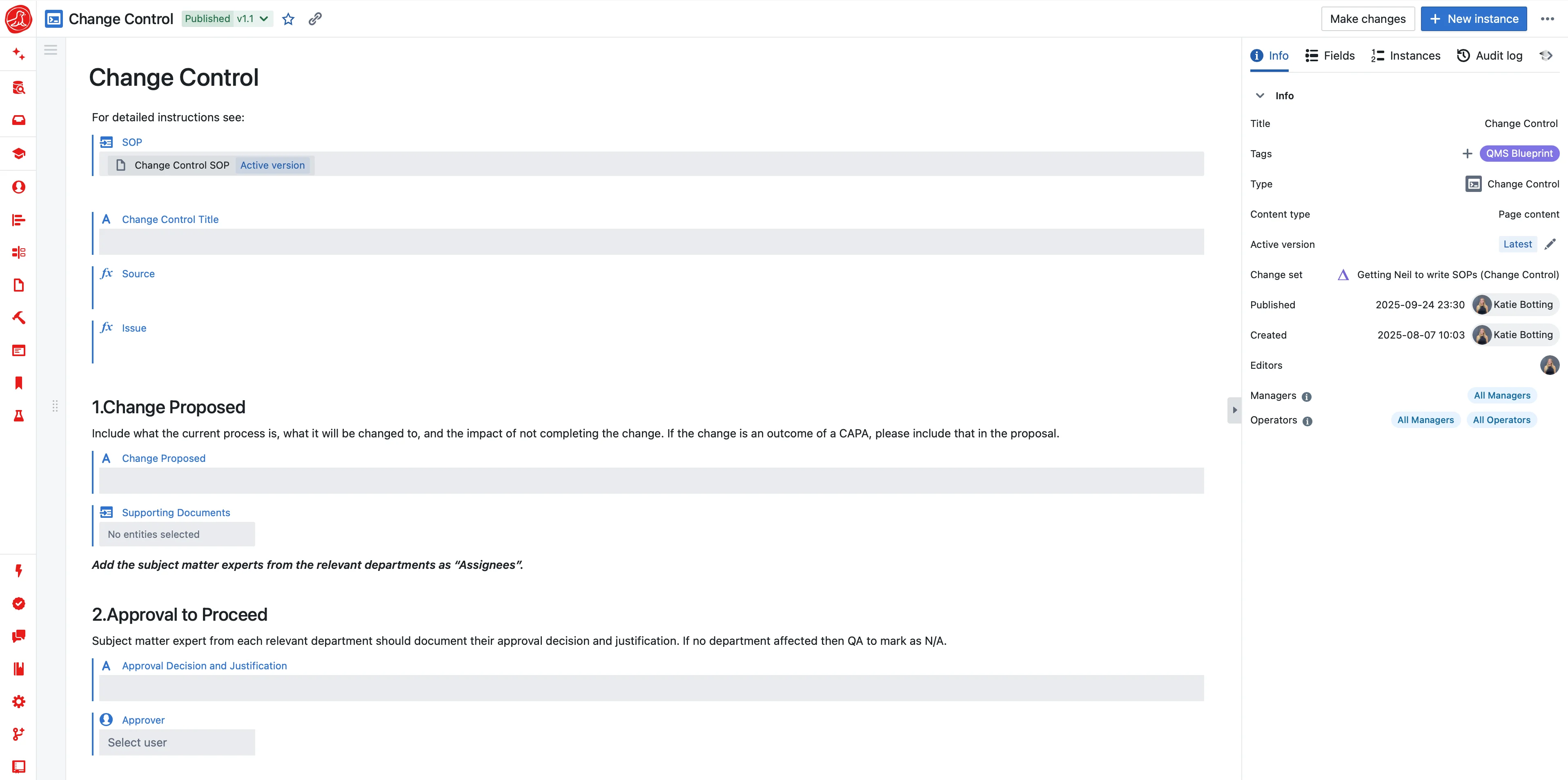

Seal treats implementation tracking as the core function of change control, not an afterthought. Approval authorizes the change. Implementation makes it real. The change cannot close until implementation is complete.

Every change generates implementation tasks based on its impact assessment. If a procedure must be revised, that's a task with an owner and a deadline. If personnel must be retrained, that's a task. If equipment must be requalified, that's a task. Each task requires evidence of completion: the revised procedure, the training records, the qualification summary.

If a task owner goes on vacation, the system doesn't lose track. Approaching deadlines generate reminders. Overdue tasks escalate to supervisors, then to quality leadership. The task doesn't disappear because the person responsible forgot about it.
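The reminder and escalation behavior described above can be sketched roughly as follows. This is a minimal illustration, not Seal's implementation: the function name, the level labels, and both time thresholds are assumptions chosen for the example.

```python
from datetime import date, timedelta

# Hypothetical thresholds; a real system would make these configurable.
REMINDER_WINDOW = timedelta(days=3)   # remind the owner this close to the deadline
LEADERSHIP_AFTER = timedelta(days=7)  # overdue this long: escalate past the supervisor


def escalation_level(deadline: date, today: date, completed: bool = False) -> str:
    """Return who should be notified about a task on a given day."""
    if completed:
        return "none"
    if today > deadline:
        overdue = today - deadline
        # Overdue tasks go to the supervisor first, then to quality leadership.
        if overdue >= LEADERSHIP_AFTER:
            return "quality_leadership"
        return "supervisor"
    if deadline - today <= REMINDER_WINDOW:
        return "owner_reminder"
    return "none"
```

Because the level depends only on the deadline and the current date, the task keeps escalating whether or not its owner is available.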

The change status reflects implementation status, not just approval status. "Approved" isn't the final state—"Implemented" is. Everyone can see which changes are approved but not yet implemented, which implementation tasks are overdue, which changes are stuck waiting on specific tasks. Visibility drives accountability.
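One way to picture a status that follows implementation rather than approval is the sketch below. The class names, fields, and the "In Review" label are assumptions made for illustration; only "Approved" and "Implemented" come from the text.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ImplementationTask:
    description: str
    owner: str
    evidence: Optional[str] = None  # reference to the completion evidence, once it exists

    @property
    def complete(self) -> bool:
        # A task counts as done only when evidence is attached.
        return self.evidence is not None


@dataclass
class ChangeRecord:
    change_id: str
    approved: bool = False
    tasks: List[ImplementationTask] = field(default_factory=list)

    def open_tasks(self) -> List[ImplementationTask]:
        return [t for t in self.tasks if not t.complete]

    def status(self) -> str:
        if not self.approved:
            return "In Review"  # hypothetical pre-approval label
        # In this sketch an approved change with no tasks counts as implemented.
        return "Approved" if self.open_tasks() else "Implemented"


change = ChangeRecord(
    "CC-2024-047",
    approved=True,
    tasks=[
        ImplementationTask("Revise coating SOP", owner="technical writer"),
        ImplementationTask("Train operators", owner="supervisor"),
        ImplementationTask("Execute validation protocol", owner="engineer"),
    ],
)
print(change.status(), [t.description for t in change.open_tasks()])
```

Under this model the record from the opening story would have stayed at "Approved" with three open tasks listed, instead of showing a green checkmark.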

Connected Impact Assessment

When you propose a change, what does it affect? In most systems, this is a judgment call. The change initiator lists what they think is affected. They might miss things. They might list things that aren't actually affected. The impact assessment is only as good as the initiator's knowledge and diligence.

In Seal, impact assessment leverages actual data relationships. You're changing this piece of equipment. The system identifies which procedures reference it, which batch records use it, which training curricula include it, which validation protocols cover it. The impact isn't guessed. It's computed from your actual data.

The initiator still reviews and refines the impact assessment. The system might identify impacts that aren't actually relevant. The initiator might know about impacts the system missed. But the starting point is comprehensive, not empty.

This matters especially for changes with broad impact. A change to a shared utility affects many procedures. A change to a common raw material affects many batch records. A change to a software system affects many processes. The system surfaces impacts that a busy initiator might overlook.
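Computing impact from data relationships amounts to a reverse lookup over references. The sketch below assumes a simple mapping from each controlled record to the items it references; the record names and the `impacted_records` helper are invented for illustration and do not reflect Seal's data model.

```python
from collections import defaultdict

# Hypothetical reference data: each controlled record lists the items it references.
REFERENCES = {
    "SOP: Tablet Coating": ["Coating Pan 1", "Purified Water"],
    "Batch Record: Product A": ["Coating Pan 1"],
    "Training: Coating Operator": ["SOP: Tablet Coating"],
    "SOP: Equipment Cleaning": ["Purified Water"],
}


def build_reverse_index(references):
    """Map each item to the set of records that reference it directly."""
    index = defaultdict(set)
    for record, items in references.items():
        for item in items:
            index[item].add(record)
    return index


def impacted_records(changed_item, references):
    """Collect records that reference the changed item, directly or indirectly."""
    index = build_reverse_index(references)
    impacted, frontier = set(), [changed_item]
    while frontier:
        current = frontier.pop()
        for record in index.get(current, ()):
            if record not in impacted:
                impacted.add(record)
                frontier.append(record)  # follow references to this record too
    return impacted


print(sorted(impacted_records("Coating Pan 1", REFERENCES)))
```

The result is a starting list for the initiator to review and refine, which matches the division of labor described above: the system proposes, the initiator confirms.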

Classification That Fits the Change

Not all changes require the same rigor. A correction to a typographical error in a procedure doesn't need the same review as a modification to a critical process parameter. Classification determines the appropriate level of control.

Major changes affect product quality, regulatory status, or validated state. They require cross-functional review: R&D, QA, Regulatory, and Operations all evaluate the proposal. Impact assessment is comprehensive. Implementation is extensive. Effectiveness review is mandatory.

Minor changes are local in scope, such as a procedure clarification that doesn't change the process or an equipment replacement with an identical model. These need review and approval, but the workflow is streamlined. QA and the process owner may be sufficient.

Administrative changes are corrections and updates that don't affect content, such as updating a document number or correcting a name. These need minimal review, just enough to maintain the controlled document system.

Classification happens at initiation based on defined criteria. The system suggests classification based on change type and affected items. Reviewers can adjust if the suggested classification doesn't fit. Classification drives workflow automatically. Major changes route through cross-functional review, minor changes through streamlined review, administrative changes through expedited approval.
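Classification-driven routing can be sketched as a suggestion function plus a lookup table. The two boolean criteria and the administrative reviewer ("Document Control") are assumptions for illustration; the major and minor reviewer lists follow the descriptions above.

```python
from enum import Enum
from typing import List, Optional


class ChangeClass(Enum):
    MAJOR = "major"
    MINOR = "minor"
    ADMINISTRATIVE = "administrative"


# Reviewer groups per class. The administrative entry is a hypothetical placeholder.
WORKFLOWS = {
    ChangeClass.MAJOR: ["R&D", "QA", "Regulatory", "Operations"],
    ChangeClass.MINOR: ["QA", "Process Owner"],
    ChangeClass.ADMINISTRATIVE: ["Document Control"],
}


def suggest_class(affects_quality_or_validated_state: bool, changes_content: bool) -> ChangeClass:
    """Suggest a classification from two simplified criteria."""
    if affects_quality_or_validated_state:
        return ChangeClass.MAJOR
    if changes_content:
        return ChangeClass.MINOR
    return ChangeClass.ADMINISTRATIVE


def route(suggested: ChangeClass, reviewer_override: Optional[ChangeClass] = None) -> List[str]:
    """Return the reviewers for a change; a reviewer's adjustment wins over the suggestion."""
    return WORKFLOWS[reviewer_override or suggested]
```

Keeping the override as an explicit argument mirrors the text: the system suggests, and a reviewer can adjust before the workflow is chosen.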

Emergency Changes

Equipment fails unexpectedly. A supplier discontinues a critical material without warning. A safety issue requires immediate process modification. You can't wait for normal change control. Production is stopped and patients are waiting.

Emergency changes allow implementation first, documentation after. Designated personnel can authorize emergency implementation when the situation requires it. The change happens immediately to address the urgent need. Formal change control proceeds in parallel with all the normal requirements (impact assessment, review, approval), but not as a gate to action.

The key is that documentation catches up. Emergency changes don't bypass change control; they reorder it. Within a defined timeframe, the emergency change must have complete documentation equivalent to any other change. If documentation doesn't catch up, the emergency change becomes an open issue requiring resolution.

Emergency change authorization is limited to defined personnel and defined circumstances. The system tracks emergency changes separately, flags them for review, and ensures they don't become a way to avoid normal controls.
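The rule that documentation must catch up within a defined timeframe can be expressed as a small state check. The 30-day window and the state labels below are assumptions for illustration; the text does not specify the actual timeframe.

```python
from datetime import date, timedelta

# Hypothetical catch-up window; the real timeframe is defined by the organization.
DOCUMENTATION_WINDOW = timedelta(days=30)


def emergency_change_state(implemented_on: date, documentation_complete: bool, today: date) -> str:
    """Classify an emergency change by whether its documentation has caught up."""
    if documentation_complete:
        return "Documented"
    if today - implemented_on > DOCUMENTATION_WINDOW:
        # Past the window without documentation: surfaces as an issue to resolve.
        return "Open Issue"
    return "Documentation Pending"
```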

External Triggers

Not all changes originate internally. Suppliers change their materials or processes, and if those changes affect your product, you need to respond. Customers change their requirements, and if you're a contract manufacturer, you need to adjust. Regulatory agencies update guidance or requirements, and you need to evaluate applicability.

External triggers initiate internal change control. A supplier notification arrives. Is this change relevant? Does it affect your process? If yes, initiate a change request, conduct impact assessment, implement required responses. Track your response to the external trigger: when you were notified, how you evaluated it, what you changed, and when you completed the work.

This creates bidirectional traceability. From the change request, you can see what external trigger initiated it. From the external trigger, you can see what internal changes responded to it. When an auditor asks how you manage supplier changes, you show them the system.
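Bidirectional traceability means each link is queryable from either end. A minimal sketch, assuming one trigger per change request and using invented identifiers:

```python
from typing import Dict, List, Optional


class TraceabilityLog:
    """Keeps trigger-to-change links navigable in both directions."""

    def __init__(self) -> None:
        self._changes_by_trigger: Dict[str, List[str]] = {}
        self._trigger_by_change: Dict[str, str] = {}

    def link(self, trigger_id: str, change_id: str) -> None:
        # Record both directions in one step so they cannot drift apart.
        self._changes_by_trigger.setdefault(trigger_id, []).append(change_id)
        self._trigger_by_change[change_id] = trigger_id

    def changes_for(self, trigger_id: str) -> List[str]:
        return list(self._changes_by_trigger.get(trigger_id, []))

    def trigger_for(self, change_id: str) -> Optional[str]:
        return self._trigger_by_change.get(change_id)


log = TraceabilityLog()
log.link("SUPPLIER-NOTICE-1", "CHANGE-A")  # hypothetical identifiers
log.link("SUPPLIER-NOTICE-1", "CHANGE-B")
print(log.changes_for("SUPPLIER-NOTICE-1"), log.trigger_for("CHANGE-B"))
```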

Effectiveness Review

Implementation completes the change. But did the change work? Did it achieve its intended purpose? Did it create new problems you didn't anticipate?

Effectiveness review answers these questions. It might take place three months after implementation, or six months after; the timing depends on the change and on what would indicate success or failure. Did the process change improve yield as expected? Did the procedure revision eliminate confusion? Did the training reduce errors?

Seal schedules effectiveness reviews automatically based on change type and defined intervals. The review is assigned, conducted, and documented. If the change worked, capture that confirmation. If the change didn't work, because the intended improvement didn't materialize or new problems emerged, capture that finding and determine next steps. Maybe the change needs modification. Maybe additional changes are required.
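Scheduling reviews from change type and defined intervals can be sketched as a lookup plus date arithmetic. The interval table is an assumption for illustration, with three and six months approximated as 90 and 180 days.

```python
from datetime import date, timedelta
from typing import List

# Hypothetical review intervals in days after implementation, per change type.
REVIEW_INTERVALS_DAYS = {
    "major": [90, 180],
    "minor": [90],
    "administrative": [],
}


def schedule_reviews(change_type: str, implemented_on: date) -> List[date]:
    """Return the due dates of effectiveness reviews for an implemented change."""
    intervals = REVIEW_INTERVALS_DAYS.get(change_type, [])
    return [implemented_on + timedelta(days=days) for days in intervals]
```

Anchoring the dates to the implementation date, not the approval date, keeps the review tied to when the new process actually took effect.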

Effectiveness review closes the loop. It transforms change control from "we changed things" to "we changed things and verified the changes achieved their purpose." That's the standard auditors expect.

The Change That Actually Happened

The auditor asks about CC-2024-047. You show them the change record: initiation, impact assessment, approval. You show them the implementation tasks: SOP revised, training completed, validation executed. You show them the evidence: the revised procedure, the training records, the validation report. You show them the effectiveness review: three months later, it confirmed that the new process performed as expected.

The change was approved. It was implemented. It was verified effective. That's change control.