The CAPA That Never Actually Worked

The FDA investigator asks to see CAPA-2023-047. You pull it up confidently. Opened fourteen months ago after a sterility failure in Suite B. Root cause analysis determined that an operator didn't follow proper aseptic technique during a critical transfer step. Corrective action: additional training for all Suite B operators on aseptic technique. Action completed April 15th. Effectiveness verification checked off May 1st. CAPA closed May 2nd. Everything looks complete.

Then the investigator pulls up your deviation log and applies a filter. Sterility failures. Past twelve months. Three failures appear. All in Suite B, all during transfer steps, all in the past six months. The investigator clicks into each deviation. The operators involved all completed that additional training in April.

The CAPA is closed. The problem isn't fixed. The training didn't work. The root cause wasn't what you thought it was. You've been congratulating yourself on a closed CAPA while the same failure mode continues.

483 observation: "The firm's CAPA system is inadequate. CAPA-2023-047 was closed without verifying that the corrective action was effective. Similar deviations continued after CAPA closure."

The Checkbox That Lies

Most CAPA systems have an "Effectiveness Verification Complete" checkbox. Click it and the CAPA closes. No evidence required. No criteria defined upfront. No waiting period to see if the fix actually worked. No comparison to baseline. Just a checkbox that someone clicks because enough time has passed and the CAPA is aging on a report.

This checkbox lies. It says effectiveness was verified when all that actually happened was that someone clicked a button. The verification wasn't defined, wasn't conducted, wasn't documented. The system accepted the click because the system doesn't know the difference between actual verification and administrative closure.

When the FDA investigator asks how you verified effectiveness, you have nothing to show. No criteria. No evidence. No actual verification. Just a checked box and a hope that the problem went away.

Effectiveness as a Gate

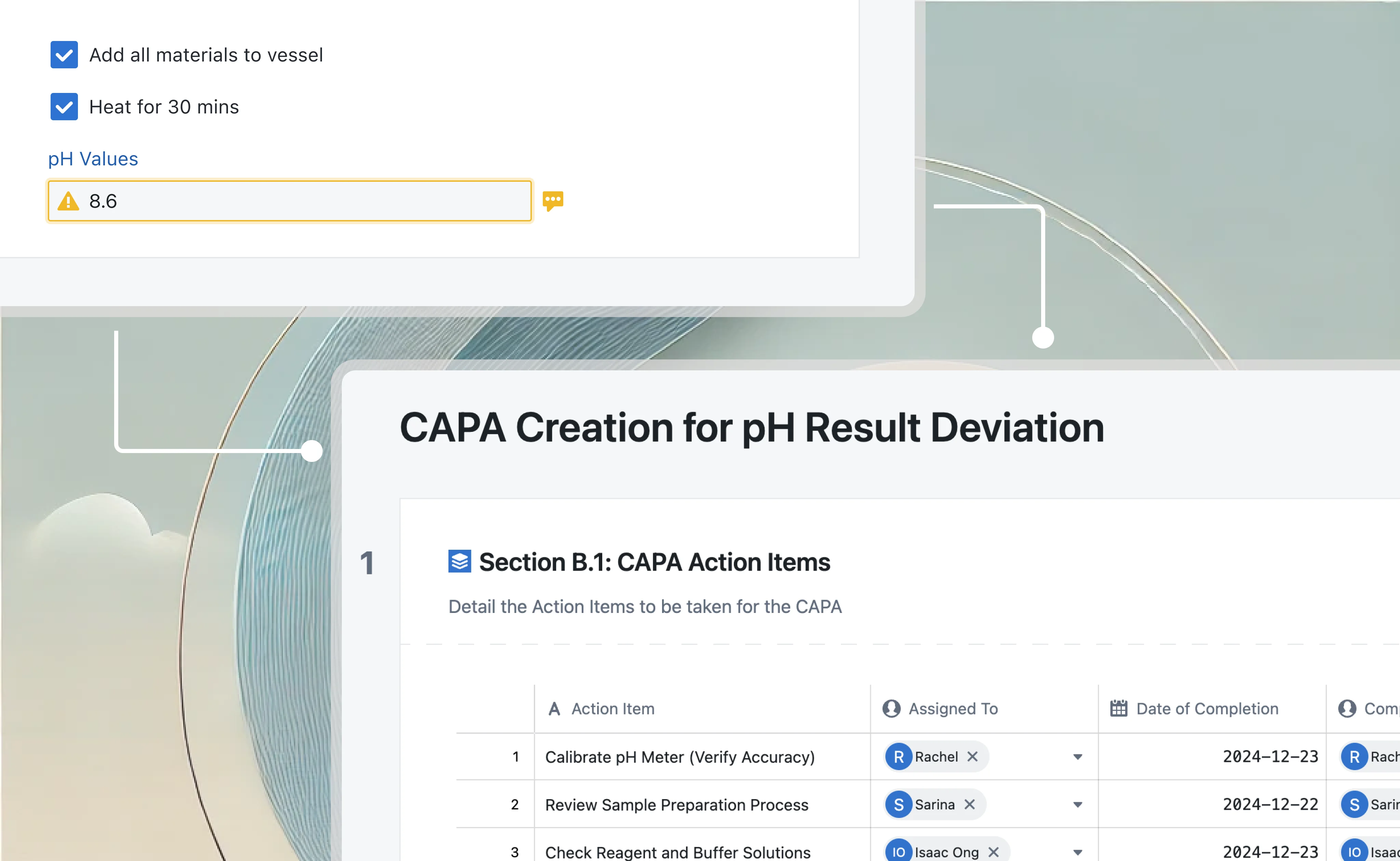

In Seal, effectiveness verification is a gate, not a checkbox. The CAPA cannot close until verification is complete, and verification requires actual evidence.

When you open a CAPA, you define what effective looks like. Specific criteria: "Zero recurrence of sterility failures attributable to aseptic technique in Suite B." Measurable outcomes: "Reduction of Suite B aseptic-related deviations from the current rate of 4 per quarter to fewer than 1 per quarter." You define how you'll verify: review of the deviation log, categorized by root cause, filtered to Suite B. You define when you'll verify: after three months of operation post-implementation, after 50 batches in the suite, whatever makes sense for the situation.

The verification isn't a checkbox. It's a scheduled activity with a defined method, an assigned owner, and a due date. When the verification date arrives, someone actually conducts the verification: they review the deviation log, check the batch records, examine the data. They document what they found. Did we meet the criteria? Is there evidence that the corrective action worked?

If yes, the CAPA closes with actual evidence of effectiveness. If no, the CAPA doesn't close. You didn't fix the problem. Back to investigation.
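
The gate described above can be sketched in a few lines of Python. This is a minimal illustration, not Seal's implementation: the names `DeviationRecord`, `EffectivenessPlan`, `verify_effectiveness`, and `can_close` are hypothetical, and the sketch assumes a deviation log that records a date, a suite, and a failure mode for each entry.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class DeviationRecord:
    """One entry in a hypothetical deviation log."""
    occurred_on: date
    suite: str
    failure_mode: str


@dataclass
class EffectivenessPlan:
    """Criteria fixed when the CAPA is opened, not when it is closed."""
    suite: str
    failure_mode: str
    max_recurrences: int   # 0 means "zero recurrence"
    implemented_on: date   # date the corrective action went live
    verify_on: date        # earliest date verification may be performed


def verify_effectiveness(
    plan: EffectivenessPlan, log: List[DeviationRecord], today: date
) -> Optional[bool]:
    """Return None if it is too early to verify, else True or False."""
    if today < plan.verify_on:
        return None  # the waiting period has not elapsed yet
    recurrences = [
        d for d in log
        if d.suite == plan.suite
        and d.failure_mode == plan.failure_mode
        and plan.implemented_on < d.occurred_on <= plan.verify_on
    ]
    return len(recurrences) <= plan.max_recurrences


def can_close(
    plan: EffectivenessPlan, log: List[DeviationRecord], today: date
) -> bool:
    """The gate: closure is allowed only on a passed verification."""
    return verify_effectiveness(plan, log, today) is True
```

The point of the design is that "not yet verified" and "verified and failed" both block closure, so there is no path from a bare click to a closed CAPA.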

When the Fix Doesn't Work

When effectiveness verification fails, the CAPA reopens automatically. This isn't an administrative burden. It's information. Your root cause analysis was wrong. Your corrective action didn't address the actual cause. The problem persists because you were solving the wrong problem.

Back to root cause analysis. Go deeper. Why didn't training work? Maybe the operators knew the technique. They passed the training assessment. Maybe the problem isn't knowledge; it's environment. Suite B has a ventilation issue that upsets the room's pressure balance during transfers. Training can't fix an engineering problem.

The cycle continues until the fix actually works. A CAPA isn't successful because you closed it. It's successful because you verified the problem is gone. If you have to reopen it three times before finding the real root cause, that's three iterations of learning. The alternative, closing it prematurely and letting the problem continue, is worse.

Finding the Real Root Cause

"Operator error" isn't a root cause. It's a label that stops investigation before investigation finds anything useful. Why did the operator err? What conditions led to the error? What would prevent the error in the future?

The operator skipped a step in the procedure. Why? The step was buried in a long paragraph and easy to miss. Why was it buried? The SOP was written years ago by someone who didn't follow good documentation practices. Why didn't anyone fix it? No one has reviewed the SOP since it was written. Why not? The company doesn't have a periodic review process for procedures.

Five whys deep, you find something systemic. Something you can fix. Something that, once fixed, prevents not just this error but similar errors in other procedures. The root cause isn't the operator who skipped a step. The root cause is the absence of a review process that would have caught the poorly written procedure years ago.

Seal provides structured root cause analysis tools: 5-Why chains that force you to keep asking, fishbone diagrams that examine every category (Equipment, Process, People, Materials, Environment, Measurement) before settling on a cause, and Is/Is-Not analysis that identifies patterns. The tools don't find root causes for you, but they prevent premature conclusions and shallow analysis.

From Symptoms to Systems

You've opened 200 CAPAs this year. They feel like 200 separate problems. But when you categorize them, patterns emerge.

Forty percent involve training gaps. Operators who didn't know the current procedure. Operators who weren't trained on updated processes. Operators who completed training but couldn't apply it.

You've been treating these as eighty individual CAPAs. Each one investigates, identifies a training gap, implements training as corrective action, and closes. But it's one problem: your training program doesn't work. It doesn't ensure competency. It doesn't keep pace with process changes. It doesn't verify that training translates to performance.

If you fix the training program (really fix it, with competency assessment, timely updates, and verification of on-the-job performance), you can prevent up to forty percent of future CAPAs. Not by closing them faster, but by not having them at all.

Trending analysis in Seal surfaces these patterns. Which categories appear most frequently? Which areas generate the most CAPAs? Which root causes recur? Quality leadership sees the forest, not just the trees. Systemic CAPAs address systemic causes. Fix the system, prevent the symptoms.
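
As a rough illustration of that kind of trending, the sketch below tallies CAPAs by root cause category and reports each category's share. It is an assumption-laden example, not Seal's analysis: the function name `category_shares` is hypothetical, and every category other than the training gaps, along with the split among them, is invented for the example. Only the totals (200 CAPAs, forty percent training-related) come from the scenario above.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def category_shares(categories: Iterable[str]) -> List[Tuple[str, int, float]]:
    """Return (category, count, share of total), most frequent first."""
    counts = Counter(categories)
    total = sum(counts.values())
    return [(cat, n, n / total) for cat, n in counts.most_common()]


# Hypothetical year of CAPAs: 200 records, each tagged with one root cause category.
capas = (
    ["training gap"] * 80
    + ["procedure unclear"] * 50
    + ["equipment"] * 40
    + ["supplier"] * 30
)

for category, count, share in category_shares(capas):
    print(f"{category:<20} {count:>4} {share:>6.0%}")
```

Ranked this way, the training category stops looking like a list of unrelated incidents and starts looking like one systemic CAPA.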

The Source Connection

CAPAs don't emerge from nowhere. They're triggered by something: a deviation, an audit finding, a complaint, a near-miss, a risk assessment. The triggering event provides the context that the investigation needs.

In many systems, this connection is a reference number in a text field. "This CAPA was initiated due to DEV-2023-156." If you want to understand the deviation, you search for it in a different system, hope you find the right one, and manually correlate.

In Seal, the connection is a live link. The CAPA record shows the source deviation with its full context: what happened, when, where, what was affected, and what the initial investigation found. When you analyze root cause, you have the source evidence at hand. When you verify effectiveness, you can check whether similar deviations have occurred since the correction.

The connection also works in reverse. From the deviation, you can see which CAPA was initiated, what root cause was identified, what correction was implemented, whether effectiveness was verified. The deviation and its resolution are one connected story.
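
One way to picture the difference between a reference number in a text field and a two-way link is the sketch below. It is purely illustrative and makes no claim about how Seal stores records: `Deviation`, `Capa`, and `QualityRecords` are hypothetical names, and the record IDs are examples.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Deviation:
    dev_id: str
    summary: str
    capa_id: Optional[str] = None  # set when a CAPA is opened from this deviation


@dataclass
class Capa:
    capa_id: str
    source_deviation_id: str
    root_cause: Optional[str] = None


class QualityRecords:
    """Hypothetical store that keeps both directions of the link in sync."""

    def __init__(self) -> None:
        self.deviations: Dict[str, Deviation] = {}
        self.capas: Dict[str, Capa] = {}

    def add_deviation(self, deviation: Deviation) -> None:
        self.deviations[deviation.dev_id] = deviation

    def open_capa(self, capa_id: str, dev_id: str) -> Capa:
        deviation = self.deviations[dev_id]  # KeyError if the source does not exist
        capa = Capa(capa_id, dev_id)
        self.capas[capa_id] = capa
        deviation.capa_id = capa_id  # reverse link, written at the same moment
        return capa

    def source_of(self, capa_id: str) -> Deviation:
        """CAPA to deviation: the full source record, not just its number."""
        return self.deviations[self.capas[capa_id].source_deviation_id]

    def capa_for(self, dev_id: str) -> Optional[Capa]:
        """Deviation to CAPA: None if no CAPA was opened."""
        capa_id = self.deviations[dev_id].capa_id
        return self.capas.get(capa_id) if capa_id else None
```

Because a CAPA can only be opened against a deviation that exists, a mistyped reference fails immediately instead of surfacing months later during an inspection.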

Action Tracking

Every CAPA requires actions: things that must be done to correct and prevent the problem. Update a procedure. Retrain personnel. Modify equipment. Change a process. Install monitoring. Each action needs an owner, a deadline, and evidence of completion.

Action tracking in Seal is rigorous. Each action is assigned to a specific person who is accountable for completion. Deadlines are dates, not "when we get to it." Evidence requirements are defined up front: what documentation proves this action is complete?

Approaching deadlines generate reminders. Overdue actions escalate: first to the owner's supervisor, then to quality leadership if the delay continues. Actions don't slip quietly into the backlog. The system surfaces them and demands resolution.

The CAPA doesn't close until all actions are complete with required evidence. You can't close a CAPA while a key action sits unfinished. The gate is explicit and enforced.
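
The reminder, escalation, and closure rules above can be sketched as follows. This is a hypothetical model rather than Seal's logic: the names `Action`, `action_status`, and `all_actions_complete` are invented, and the 7-day reminder window and 14-day escalation threshold are assumptions chosen only to make the example concrete.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Action:
    description: str
    owner: str
    due: date
    evidence: Optional[str] = None  # reference to the attached proof of completion

    @property
    def complete(self) -> bool:
        # An action counts as complete only when evidence is attached.
        return self.evidence is not None


def action_status(
    action: Action, today: date, remind_days: int = 7, escalate_days: int = 14
) -> str:
    """Classify an action; the thresholds are assumed values, not Seal defaults."""
    if action.complete:
        return "complete"
    if today > action.due + timedelta(days=escalate_days):
        return "escalate to quality leadership"
    if today > action.due:
        return "escalate to supervisor"
    if today >= action.due - timedelta(days=remind_days):
        return "remind owner"
    return "on track"


def all_actions_complete(actions: List[Action]) -> bool:
    """Closure gate: at least one action, and every action has evidence."""
    return bool(actions) and all(a.complete for a in actions)
```

Tying completion to the presence of evidence, rather than to a status flag, is what keeps an action from being marked done without proof.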

The CAPA That Actually Worked

The FDA investigator asks to see CAPA-2023-047. You show them the investigation: how you went beyond "operator error" to find the ventilation issue in Suite B. You show them the corrective actions: an engineering modification to the HVAC system, a procedure update for the transfer protocol, and training on the new procedure. You show them the evidence: engineering work order completion, the revised procedure, training records.

You show them the effectiveness verification. Criteria: zero sterility failures attributable to Suite B environmental conditions in the six months post-implementation. Method: deviation log review, root cause categorization. Results: eighteen batches in Suite B, zero sterility failures, verification passed. Evidence attached.

The CAPA is closed because the problem is fixed. Not because someone clicked a checkbox. Not because enough time passed. Because you verified that the corrective action worked.

That's CAPA.