The Submission That Was Wrong Before It Was Sent

Three months assembling Module 3. Specifications transcribed from LIMS into Word tables. Process descriptions written by regulatory affairs from manufacturing SOPs. Stability tables built row by row from study reports. A thousand small decisions about what to include, how to phrase it, whether this version was current.

The regulatory affairs team was careful. They double-checked every number. They cross-referenced specifications against test results. They asked manufacturing to review the process descriptions. Everyone signed off. The submission went out.

Three weeks later, an FDA reviewer question: "The potency specification in 3.2.S.4.1 states 95-105%, but the release data in 3.2.S.4.4 shows testing against 98-102%. Please clarify which specification is correct and provide the history of any changes."

Both specifications existed, at different times. The wider range was the original IND specification. During development, characterization data supported tightening to 98-102%. The CMC team updated the release data in 3.2.S.4.4 but missed the specification table in 3.2.S.4.1. The cross-reference check didn't catch it because both numbers were technically in the documents, just in different versions that had been merged during assembly.

This isn't carelessness. It's architecture. When source data lives in multiple systems and the submission is manual assembly, inconsistency isn't a risk. It's a certainty. The question isn't whether errors exist. It's whether you find them before the reviewer does.

The Assembly Line Problem

Walk through how most organizations build Module 3.

Specifications start in the analytical development lab, get formalized in LIMS, get transcribed into specification tables for the submission. Three copies of the same information. When a specification changes, three places need updating. They rarely update simultaneously.

Process descriptions start as manufacturing procedures: SOPs, batch records, process flow diagrams. Someone in regulatory affairs reads these documents and writes a narrative description for the submission. Translation introduces interpretation. The regulatory writer might describe a "mixing step" when manufacturing calls it "homogenization." Six months later, an inspector reads the CMC submission, walks the manufacturing floor, and asks why the terminology doesn't match.

Stability tables start as study reports from the stability lab. Someone extracts the data into Excel, formats it according to regional preferences, pastes it into the submission document. The study continues generating data after submission. The submitted tables become instantly stale. When FDA asks for updated stability data, someone rebuilds the tables from scratch.

Each transcription is an opportunity for error. Each translation is an opportunity for drift. Each manual step is a delay. The submission deadline approaches, changes freeze, and whatever inconsistencies exist become permanent.

Generated, Not Assembled

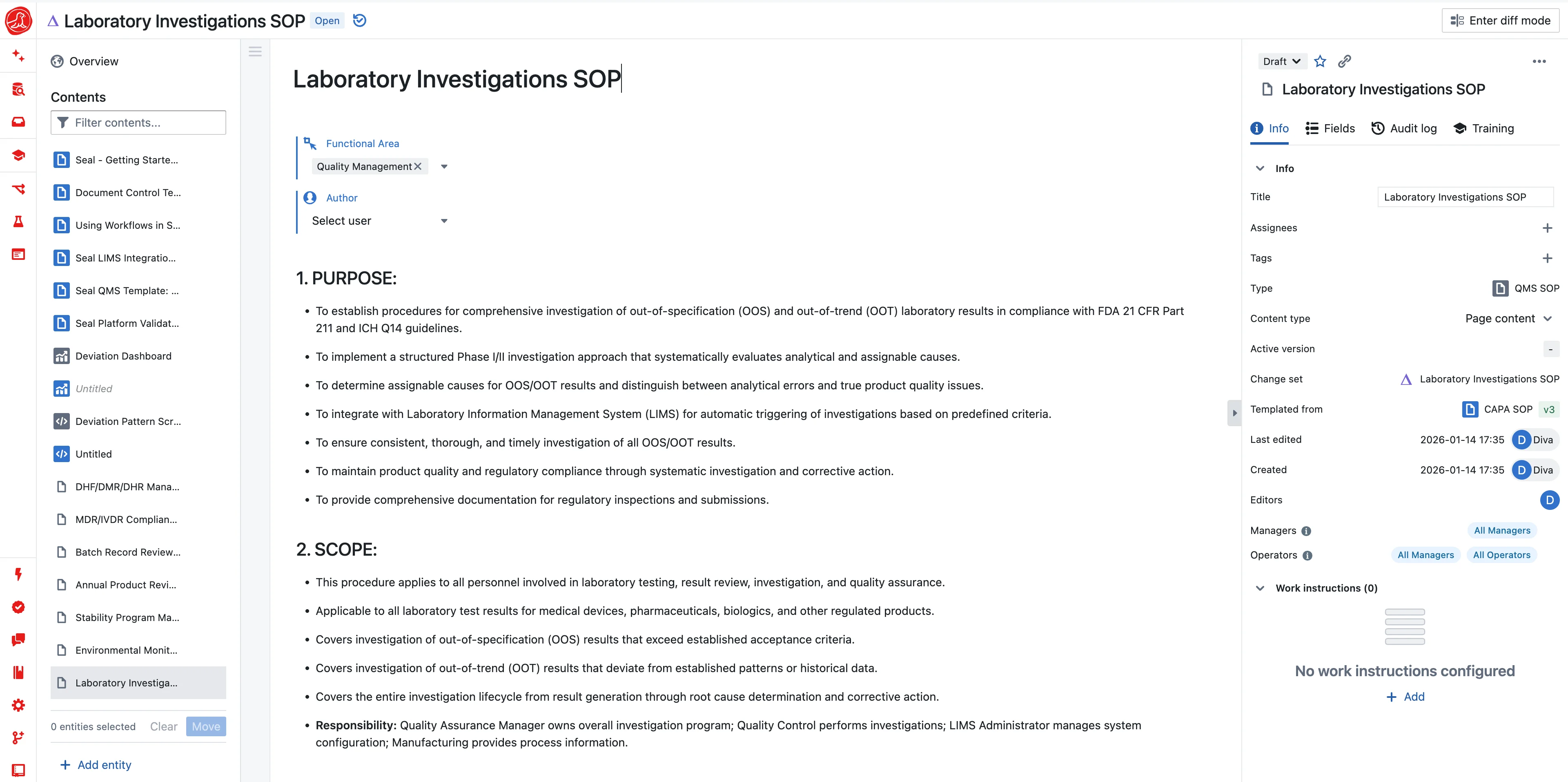

Seal generates CMC content from structured operational data. This isn't about convenience. It's about truth.

Specifications in your submission are the specifications in your LIMS. Not copies. Views. The same data object, formatted for regulatory presentation. When a specification changes, it changes once, in one place. The submission reflects reality because it's derived from reality.

Process descriptions come from process definitions in your MES: unit operations, parameters, equipment, in-process controls, all as structured data. When you need a narrative description for Module 3, the system generates it from the structure. The terminology matches manufacturing because it comes from manufacturing. When the process changes, the description reflects it automatically.
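As a rough illustration of generating narrative from structure, here is a minimal Python sketch. The field names (`name`, `equipment`, `parameters`, `ipc`), the sample step, and the sentence template are all assumptions made up for this example; they are not Seal's actual data model or output format.

```python
# Hypothetical structured process definition, as it might be held in an MES.
PROCESS = [
    {
        "name": "homogenization",
        "equipment": "vessel V-101",
        "parameters": {"speed_rpm": 3000, "time_min": 15},
        "ipc": "visual uniformity",
    },
]


def describe_step(number, step):
    """Render one unit operation as a sentence, reusing manufacturing's own terms."""
    params = ", ".join(f"{name} = {value}" for name, value in step["parameters"].items())
    return (
        f"Step {number}: {step['name'].capitalize()} is performed in the "
        f"{step['equipment']} ({params}). In-process control: {step['ipc']}."
    )


def describe_process(steps):
    """Render the whole process, one numbered sentence per unit operation."""
    return "\n".join(describe_step(i, s) for i, s in enumerate(steps, start=1))


narrative = describe_process(PROCESS)
print(narrative)
```

Because the step name is read from the definition rather than retyped, the narrative says "homogenization" whenever manufacturing does.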

Stability tables are queries against study data, not snapshots frozen in time. New timepoints arrive, run the query again. The table updates. When FDA asks for current data, you provide current data. Not a table that was accurate three months ago.
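The difference between a snapshot and a query can be sketched in a few lines of Python. The record shape, study ID, and values below are invented for illustration and assume nothing about how Seal stores study data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StabilityResult:
    study_id: str
    condition: str   # storage condition, e.g. "25C/60%RH"
    month: int       # timepoint in months
    attribute: str   # tested attribute, e.g. "potency"
    value: float


def stability_table(results, study_id, condition, attribute):
    """Return (month, value) rows for one study, condition, and attribute, by timepoint."""
    rows = [
        (r.month, r.value)
        for r in results
        if r.study_id == study_id and r.condition == condition and r.attribute == attribute
    ]
    return sorted(rows)


# Hypothetical data available at submission time.
results = [
    StabilityResult("STB-001", "25C/60%RH", 0, "potency", 100.2),
    StabilityResult("STB-001", "25C/60%RH", 3, "potency", 99.8),
]
table_at_submission = stability_table(results, "STB-001", "25C/60%RH", "potency")

# A new timepoint arrives; rerunning the same query picks it up.
results.append(StabilityResult("STB-001", "25C/60%RH", 6, "potency", 99.5))
table_now = stability_table(results, "STB-001", "25C/60%RH", "potency")
```

Nothing is rebuilt by hand: the table is whatever the query returns at the moment it runs.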

This works because Seal is the operational system. Your batch records run here. Your stability studies run here. Your specifications release product here. The CMC submission is a view of the same data that runs your operations.

Version Control That Actually Works

Specifications evolve throughout development. Early-phase limits are wide because you're still learning the process capability. Characterization narrows them as you come to understand what the process can consistently achieve. Validation confirms them by proving the process holds specification at commercial scale.

Most systems track specification changes as document versions. Version 1, Version 2, Version 3. What changed between versions? Open both documents, compare manually. What drove the change? Check your change control system. If you documented it, if you can find it.

Seal tracks specifications as structured data with full history. Every change recorded with timestamp, user, and reason. What was the potency specification on May 15th? Query and answer. What drove the change from 95-105% to 98-102%? The change record links to the characterization study that justified it.
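A point-in-time lookup over a specification history might look like the sketch below. The record fields, the dates (including the year), the user name, and the study reference are hypothetical placeholders chosen for this example, not details of Seal's implementation.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SpecVersion:
    attribute: str
    low: float
    high: float
    effective: date         # date this version took effect
    changed_by: str
    reason: str
    justification_ref: str  # link to the supporting study or record


def spec_as_of(history, attribute, on):
    """Return the version of `attribute` in force on date `on`, or None if none existed."""
    candidates = [v for v in history if v.attribute == attribute and v.effective <= on]
    if not candidates:
        return None
    return max(candidates, key=lambda v: v.effective)


# Hypothetical history for the potency example.
HISTORY = [
    SpecVersion("potency", 95.0, 105.0, date(2024, 1, 10), "j.doe",
                "Initial IND specification", "IND-SPEC-001"),
    SpecVersion("potency", 98.0, 102.0, date(2024, 6, 1), "j.doe",
                "Tightened after characterization", "CHAR-017"),
]

on_may_15 = spec_as_of(HISTORY, "potency", date(2024, 5, 15))
current = spec_as_of(HISTORY, "potency", date(2024, 12, 31))
```

Here `on_may_15` resolves to the 95-105% version, and `current.justification_ref` points at the record that justified the tightening.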

When you generate CMC content, you specify which version. The system ensures internal consistency: every reference to that specification uses the same version. The inconsistency that triggered the reviewer's question in the example submission becomes structurally impossible.
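One way to picture version pinning: sections hold a reference to the specification rather than a typed-in number, and every reference is resolved against the same pinned version at generation time. The sketch below assumes a made-up version table and section templates; it illustrates the idea only.

```python
# Hypothetical versioned specification store: (attribute, version) -> (low, high).
SPEC_VERSIONS = {
    ("potency", 1): (95.0, 105.0),
    ("potency", 2): (98.0, 102.0),
}

# Sections contain placeholders, never literal limits.
SECTION_TEMPLATES = {
    "3.2.S.4.1": "Potency specification: {potency}.",
    "3.2.S.4.4": "Batches were tested against a potency limit of {potency}.",
}


def render_sections(pinned):
    """Render every section with the pinned version of each attribute.

    `pinned` maps attribute name -> version number.
    """
    values = {}
    for attribute, version in pinned.items():
        low, high = SPEC_VERSIONS[(attribute, version)]
        values[attribute] = f"{low:g}-{high:g}%"
    return {section: tpl.format(**values) for section, tpl in SECTION_TEMPLATES.items()}


rendered = render_sections({"potency": 2})
for section, text in rendered.items():
    print(section, text)
```

Since no section carries its own copy of the range, two sections cannot disagree within one generated package.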

Multi-Market Without Multi-Effort

Global submissions mean multiple CMC packages. FDA wants stability tables formatted one way. EMA wants them another. PMDA has specific expectations for the Japanese market. Health Canada, TGA, and ANVISA each have their own preferences.

Most organizations build separate submissions for each market. Same data, formatted five different ways, maintained as five separate document sets. A specification change means five updates. A stability data refresh means five tables rebuilt. The maintenance burden scales with the number of markets.

Seal maintains one source with multiple presentation layers. The underlying data is identical: specifications, processes, stability, characterization. Only the formatting changes. Regional templates handle the differences: FDA formatting, EMA formatting, PMDA formatting, all generated from the same structured content.

Update the specification once. Regenerate all regional submissions. The data matches because it's the same data. The formatting differs because regions differ. The effort doesn't multiply.
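The one-source, many-templates pattern can be sketched as a set of renderers over the same rows. The two layouts below are invented purely to show the mechanism; they do not represent any agency's actual formatting requirements, and the region names are placeholders.

```python
# One shared data source: (month, potency %) rows. Values are hypothetical.
ROWS = [(0, 100.2), (3, 99.8), (6, 99.5)]


def render_region_a(rows):
    """Hypothetical layout: one line per timepoint."""
    return "\n".join(f"{month} months: {value:.1f}%" for month, value in rows)


def render_region_b(rows):
    """Hypothetical layout: timepoints as columns."""
    header = " | ".join(f"T{month}" for month, _ in rows)
    values = " | ".join(f"{value:.1f}" for _, value in rows)
    return header + "\n" + values


TEMPLATES = {"region_a": render_region_a, "region_b": render_region_b}


def generate_all(rows):
    """Render the same rows through every regional template."""
    return {region: render(rows) for region, render in TEMPLATES.items()}


packages = generate_all(ROWS)
```

Adding a market means adding a renderer; the rows themselves are never copied or re-entered.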

When Reality Must Match the Promise

CMC submissions are promises. This is how we make the product. These are the specifications we control. This is the stability we've demonstrated. Inspectors verify that reality matches the promise.

The gap problem is universal. You wrote the CMC submission eighteen months ago based on your process at the time. Since then, you've made minor adjustments: optimized a temperature setpoint, adjusted a hold time, refined a mixing speed. Each change went through change control. Each was minor. None seemed worth a submission update.

The inspector walks the floor. The process they observe doesn't quite match the process they read about. Not wrong, exactly. Evolved. But the CMC submission promised one thing and reality shows another. That's a finding.

Seal eliminates the gap by keeping submissions synchronized with operations. Process descriptions generate from current process definitions. When the process changes through proper change control, the description reflects it. You control when to update the submission. But you always know whether it matches, because you can compare at any time.

The comparison isn't manual. Query the current process definition against the submitted description. Differences surface automatically. You decide which need submission updates. But you're never surprised by what an inspector finds.
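A comparison of this kind reduces to a structured diff. The sketch below assumes process definitions are stored as nested mappings of step to parameters; that shape, the step name, and the values are assumptions for illustration.

```python
def diff_process(submitted, current):
    """Compare two {step: {parameter: value}} mappings.

    Returns a list of (step, parameter, submitted_value, current_value) tuples,
    using None where a step or parameter is absent on one side.
    """
    differences = []
    for step in sorted(set(submitted) | set(current)):
        old = submitted.get(step, {})
        new = current.get(step, {})
        for param in sorted(set(old) | set(new)):
            if old.get(param) != new.get(param):
                differences.append((step, param, old.get(param), new.get(param)))
    return differences


# Hypothetical example: one setpoint was optimized after submission.
submitted = {"homogenization": {"temperature_c": 25, "hold_time_min": 30}}
current = {"homogenization": {"temperature_c": 27, "hold_time_min": 30}}

gaps = diff_process(submitted, current)
for step, param, was, now in gaps:
    print(f"{step}.{param}: submitted {was}, current {now}")
```

The output is a list of candidate gaps; deciding which of them warrants a submission update remains a regulatory judgment.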

The Lifecycle After Approval

Approval isn't the end of CMC work. It's the beginning of change management.

Post-approval changes cascade through submissions. New manufacturing site: update the site description, revalidate, demonstrate comparability. Specification revision: justify the change, update all references, show impact on stability. Process optimization: describe the modification, link to the data that supports it.

Most organizations treat post-approval changes as major projects. Assemble a team. Identify everything that needs updating. Manually revise each section. Hope you didn't miss anything. The variation submission takes months.

Seal tracks change impact automatically. You change a specification. The system identifies every submission section that references it, every stability study that tests against it, every batch record that released to it. Impact isn't discovered through review. It's computed from relationships.
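"Computed from relationships" can be read as a graph traversal: start at the changed item and follow every recorded reference. The sketch below uses a breadth-first search over a hypothetical reference graph; the node names and edges are invented for the example.

```python
from collections import deque

# Hypothetical relationships: each key is referenced by the items in its list.
REFERENCED_BY = {
    "spec:potency": ["section:3.2.S.4.1", "study:STB-001", "batch:B-1001"],
    "study:STB-001": ["section:3.2.S.7.3"],
    "batch:B-1001": ["section:3.2.S.4.4"],
}


def impacted(start, edges):
    """Return every item reachable from `start` by following references (breadth-first)."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for dependent in edges.get(node, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return sorted(seen)


impact = impacted("spec:potency", REFERENCED_BY)
for item in impact:
    print(item)
```

The traversal also surfaces indirect impact, such as a submission section that cites a stability study which in turn tests against the changed specification.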

Variation submissions generate from the same structured data. What changed? The system knows. It recorded the change. What's the impact? The system knows. It traced the relationships. What evidence supports the change? The system knows. It linked the studies.

Answering Questions Fast

Reviewer questions are inevitable. FDA wants clarification. EMA asks for additional data. PMDA requests reformatting. The quality of your response affects the quality of your relationship.

Slow responses frustrate reviewers. "We need to pull that data from archives" signals disorganization. "We'll need a few weeks to compile that analysis" signals capability gaps. Reviewers form impressions. Those impressions affect scrutiny.

Fast, accurate, well-documented responses build confidence. "Here's the data you requested" with comprehensive backup shows control. "Here's the analysis with the underlying studies linked" shows transparency. Reviewers who trust your data management ask fewer questions.

Seal makes CMC data queryable. What's the basis for this specification? Query the characterization studies. Show stability data at 25°C/60%RH for the last three years? Generate in seconds. What was the impurity profile for batch X compared to batch Y? Run the comparison. Responses derive from live data, formatted for the question, available immediately.

This isn't about impressing reviewers. It's about accuracy. When responses come from queried data rather than assembled documents, they're correct. When they're generated rather than transcribed, they're consistent. When they're fast, you don't make errors under time pressure.

Neil Writes Module 3 From Your Operational Data

Your regulatory affairs team spends months translating process data into regulatory prose. Neil, Seal's AI, does the translation automatically. Tell Neil to generate 3.2.S.2.2 from your drug substance manufacturing process. Neil renders unit operations, parameters, equipment, and in-process controls into the narrative format regulators expect, drawn directly from your structured process definitions, not from someone's recollection of what the process looks like.

Stability tables generate from study data. Neil formats results according to regional preferences: FDA, EMA, ICH. When new timepoints arrive, regenerate in seconds. Specification justifications draft themselves: Neil examines your characterization data and process capability and writes the rationale. You verify the logic rather than drafting from scratch.

Where Neil saves the most time is after submission. Reviewer questions arrive. Neil drafts responses from queryable data because the underlying records are still live. Post-approval changes need variations. Neil identifies which sections are affected and generates updated text. Annual reports compile automatically. The lifecycle management that used to be a manual re-assembly exercise becomes a regeneration from current data.

Getting Started

If you're running operations in Seal (LIMS, MES, stability), CMC generation works immediately. Your specifications are already structured. Your processes are already defined. Your stability data is already queryable. Module 3 content generates from what you're already doing.

If you're not, start with one component. Many organizations begin with stability: define protocols, track timepoints, trend data. Stability tables that took hours to build become queries. When you're ready to add specifications and process definitions, the integration deepens. CMC content becomes increasingly complete as your operational data becomes increasingly structured.

You don't have to wait for your next submission. Build the structure now. When the submission deadline arrives, the content generates itself.